Healthcare AI Has an Economics Problem

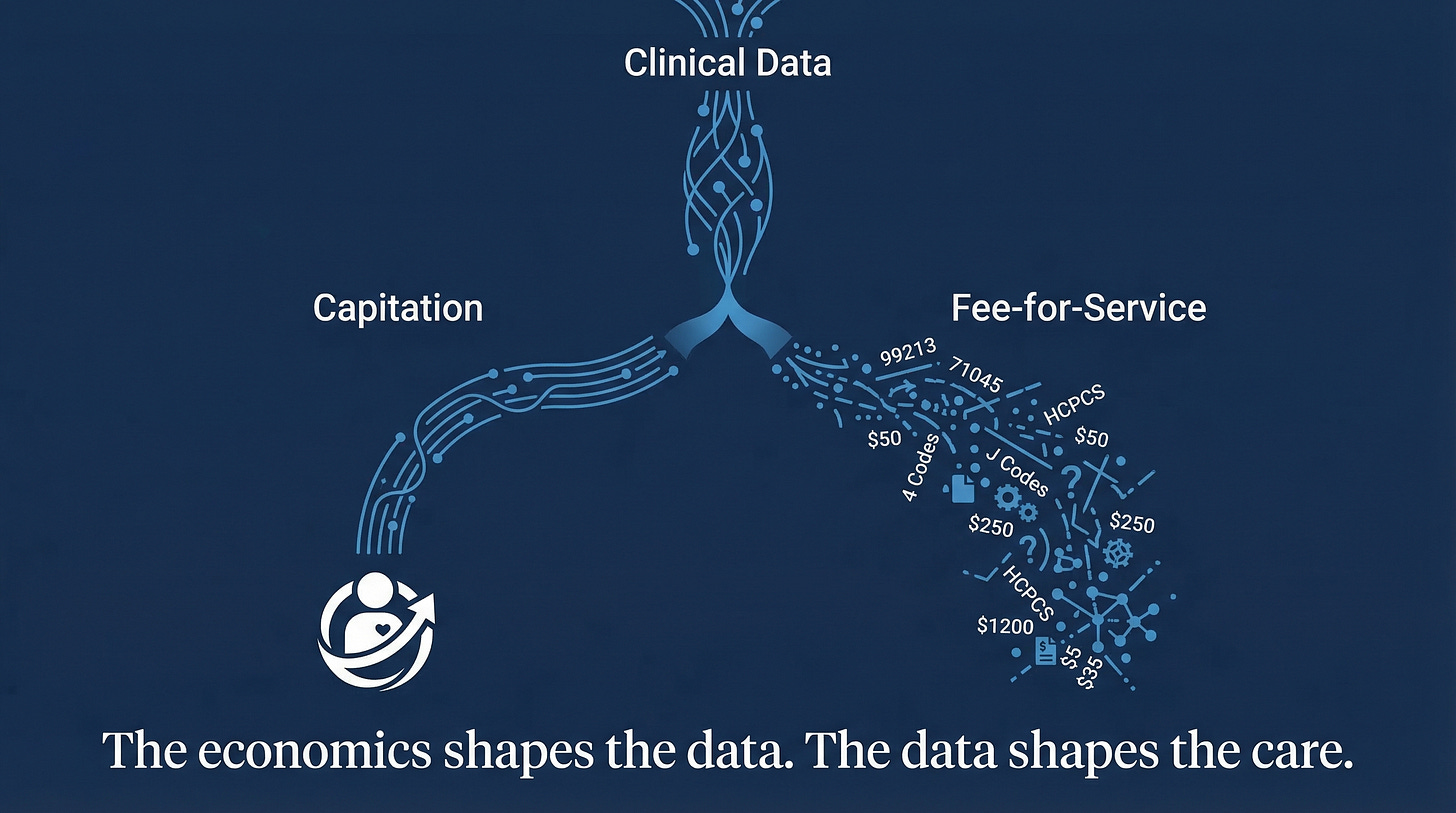



Health systems under different payment models have deployed the same kind of ambient AI scribe. Kaiser Permanente, which operates under capitation, ran one across 7,260 physicians over fifteen months. The result: 15,791 hours of documentation time saved, with 84% of physicians reporting better patient communication.[1] Several fee-for-service systems adopted similar tools and saw something different. At one Texas oncology practice, documented diagnoses per encounter rose from 3.0 to 4.1.[2] At Riverside Health, physician work effort increased 11% and HCC diagnoses jumped 14%.[3]

Same technology. Different payment structures. Different outcomes.

Kaiser’s physicians had no financial incentive to upcode. The AI reduced their documentation burden, and that was the end of the story. In fee-for-service settings, the AI also reduced documentation burden, but it simultaneously captured billable complexity that physicians had previously left on the table. The tool worked exactly as designed in both cases. The economics determined what “working” meant.
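The fee-for-service figures in the opening comparison are reported in different forms: absolute counts at the Texas practice, percentages at Riverside. A minimal sketch that puts the Texas figure on the same percentage footing, using only the numbers quoted above (the helper name `relative_change` is illustrative, not taken from any of the cited studies):

```python
def relative_change(before: float, after: float) -> float:
    """Return the fractional change from `before` to `after`."""
    if before == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (after - before) / before


# Documented diagnoses per encounter at the Texas oncology practice,
# as quoted in the text.
dx_before, dx_after = 3.0, 4.1
dx_change = relative_change(dx_before, dx_after)

print(f"Documented diagnoses per encounter: {dx_change:+.1%}")
```

On these inputs the increase is roughly 37%, which is the number to hold next to Riverside's 11% and 14%.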

A Thousand Tools, Seven Codes

The FDA has cleared over 1,000 AI-enabled medical devices, the majority in radiology.[4] Of those, as of early 2025, exactly seven have CPT reimbursement codes. Two have Category I codes, both for cardiac CT, and both exist only because their vendors funded large clinical trials to prove economic value to payers.[5]

The rest of the market has no viable path to getting paid.
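To put a rough number on "the rest of the market," here is a back-of-the-envelope sketch using the counts quoted above. It treats 1,000 as a floor for cleared devices (the text says "over 1,000"), so the shares it prints are upper bounds rather than exact figures. The variable names are illustrative.

```python
# Counts quoted in the text. The device count is a floor ("over 1,000"),
# so every share computed from it is an upper bound.
cleared_devices_floor = 1000
with_any_cpt_code = 7
with_category_i_code = 2

any_code_share = with_any_cpt_code / cleared_devices_floor
category_i_share = with_category_i_code / cleared_devices_floor

print(f"Share with any CPT code: at most {any_code_share:.1%}")
print(f"Share with a Category I code: at most {category_i_share:.1%}")
```

Under that assumption, fewer than one cleared device in a hundred has any CPT code at all.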

This is not a regulatory failure or a technical failure. CMS has acknowledged that its practice expense methodology was not designed for AI applications.[5] The tools exist and have cleared FDA review. But the reimbursement infrastructure treats them as overhead, not as services, so hospitals that adopt them absorb the cost. The rational response is to not adopt, and the claims data confirms it: across billions of commercial claims from 2018 to 2023, only one AI tool, HeartFlow’s FFR-CT, accumulated more than 10,000 total claims.[6]

The Alert Nobody Follows

Clinical decision support faces a related but distinct problem. A 2024 meta-analysis of drug-drug interaction alerts found an overall override rate of 90%, with individual studies reporting rates between 58% and 96%.[7] Clinicians override alerts at these rates not because they are careless but because they are responding to a clear incentive structure: following the alert costs time and documentation, while overriding costs nothing.

Agniel, Kohane, and Weber showed why this runs deeper than fatigue. Studying 272 types of lab tests across two Boston hospitals, they found that the presence and timing of a blood draw predicted patient mortality better than the results of the test itself.[8] EHR data reflects healthcare processes, including who gets tested, when, and by whom, more than it reflects patient biology. Machine learning models trained on this data inherit those process artifacts. An algorithm optimizing on historically generated clinical data does not learn medicine. It learns the operational patterns of the institution that generated the data.[9]

Three Mechanisms

I think healthcare AI failures cluster around three economic mechanisms. Each involves a different layer of the principal-agent structure that Arrow described in 1963.[10]

1. Billing capture. Clinical documentation improvement AI is marketed as helping physicians document accurately. Its economic function is increasing case mix index and DRG payments. Risk adjustment AI is described as better understanding patient complexity. Its economic function is improving HCC coding completeness for Medicare Advantage capitation. The companies building these tools respond rationally to market incentives: in fee-for-service and managed care, measurable value flows from billing optimization. That is where the ROI math closes.[11]

Dai, Kvedar, and Polsky laid out where this leads. Ambient scribes create temporary revenue gains for early adopters. Payers recalibrate. Cigna began auto-downcoding level 4-5 E/M claims in late 2025 unless complexity was clearly justified.[3] Late adopters inherit lower baselines without the first-mover upside. This is a classic arms race, and the equilibrium is one where the technology adds cost without adding clinical value.

2. The workflow tax. Physicians already split their time roughly evenly between patients and desktop work.[12] AI tools that optimize organizational metrics, including readmission rates, throughput, and coding accuracy, typically add clicks to clinician workflows. The individual decision calculus is straightforward: use the AI recommendation and absorb additional documentation time with no compensation, or ignore it and absorb no cost and no penalty. Maliha et al. sharpened this further with the liability paradox: clinicians who follow an AI recommendation that proves wrong face potential malpractice liability, and clinicians who ignore one face the same liability if the patient is harmed.[13] Vendors bear no liability in either case.

Organizations diagnose the resulting non-adoption as “clinician resistance.” The clinicians are performing risk management.

3. Split beneficiaries. A hospital invests in care coordination AI that reduces ED visits. The savings accrue to the insurer. The hospital bears the implementation cost and workflow disruption. UnitedHealth’s nH Predict algorithm illustrates the extreme version: as alleged in a class action complaint, the AI predicts the duration of post-acute care for Medicare Advantage patients and uses those predictions to limit coverage. The complaint alleges over 90% of denials are overturned on appeal, and only 0.2% of patients appeal. If those numbers hold, the business model works precisely because most people accept the denial.[14]
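The arithmetic behind that last claim can be sketched directly. The example below assumes the alleged overturn rate applies to denials that are actually appealed, and uses 0.90 and 0.002 as stand-ins for "over 90%" and "only 0.2%." Both are allegations from the complaint, not established facts, and the function name `denial_outcomes` is hypothetical.

```python
def denial_outcomes(denials: int, appeal_rate: float, overturn_rate: float) -> dict:
    """Split a pool of denials into appealed, overturned, and standing.

    Assumes `overturn_rate` applies only to denials that are appealed.
    """
    appealed = denials * appeal_rate
    overturned = appealed * overturn_rate
    return {
        "appealed": appealed,
        "overturned": overturned,
        "standing": denials - overturned,
    }


# Rates as alleged in the complaint; treated here as illustrative inputs.
result = denial_outcomes(10_000, appeal_rate=0.002, overturn_rate=0.90)

for label, count in result.items():
    print(f"{label}: {count:.0f} per 10,000 denials")
```

Under these assumptions, about 20 of every 10,000 denials are appealed and about 18 are reversed, so roughly 99.8% of denials stand regardless of how often appeals succeed.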

What Works, and Why

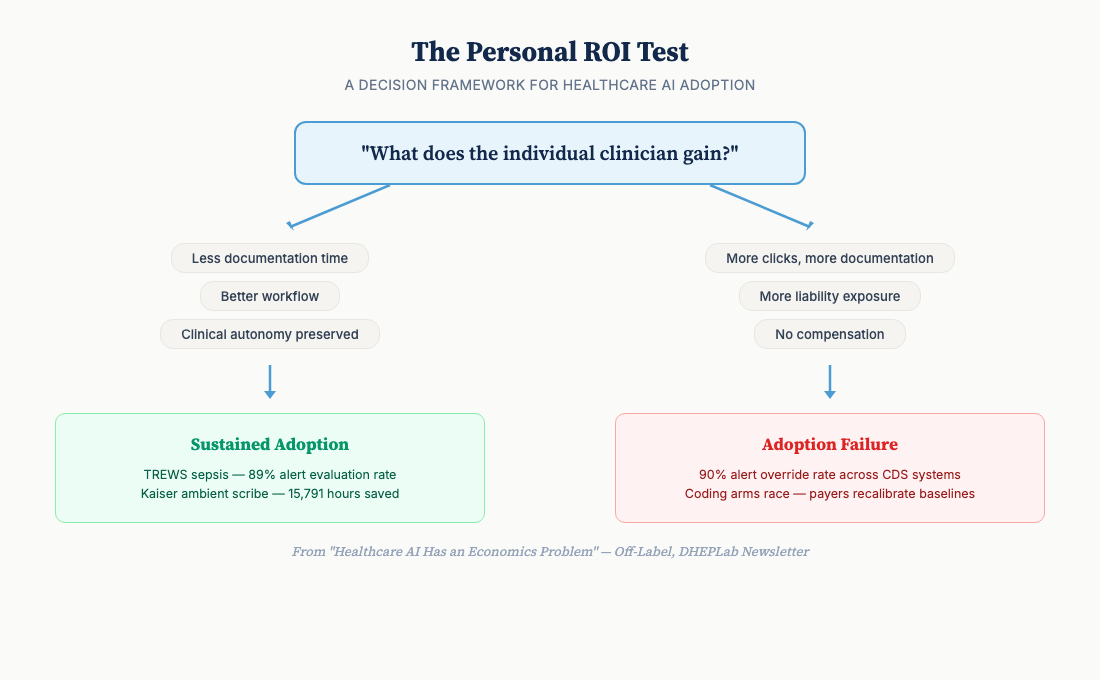

The cases where clinical AI achieves sustained adoption share a common feature. The individual user gains something.

TREWS, the sepsis early warning system at Johns Hopkins, achieved an 89% alert evaluation rate across five hospitals and 4,000 clinicians, with an 18% reduction in in-hospital mortality and antibiotics delivered nearly two hours earlier.[15] The companion implementation study explains why: TREWS provided patient-specific evidence rather than generic alerts, preserved clinician autonomy over treatment decisions, and integrated into existing workflows rather than adding new steps.[16] It passed what I consider the Personal ROI Test. The clinicians’ job became easier, not harder.

Kaiser’s ambient scribe deployment works for the same reason, amplified by payment structure. Under capitation, there is no coding arms race. The tool reduces burden. The institution captures no incremental billing revenue from the AI’s output. The physician’s incentive and the organization’s incentive point in the same direction.

Radiology AI for flagging normal studies follows the same pattern. Radiologists review fewer cases or spend more time on complex ones. Personal productivity improves. Adoption sustains.


The Missing Variable

The FDA has cleared the technology. The models achieve impressive benchmarks: AUCs above 0.9, sensitivities above 80%, performance exceeding clinicians on narrow tasks.[17] Yet adoption remains low outside a few use cases.

The missing variable is economic, not technical. Most healthcare AI failures are misdiagnosed: the presenting symptom is technical, but the underlying condition is economic. This implies that reimbursement reform, not model improvement, is the highest-leverage intervention for AI adoption.

Before deploying clinical AI, the question that matters most is not “does the model perform well?” but “what does the individual user gain from using it?” If the answer is more work, more liability, or more documentation with no compensation, the prediction is straightforward: the tool will go unused. This is a harder design constraint than it sounds. Tools optimized for individual clinician value are harder to scale and harder to sell to procurement offices that evaluate on organizational ROI. Dranove and Garthwaite put the supply-side version of this argument directly: fee-for-service creates rents that AI threatens, and the physicians most affected have the most influence over implementation decisions.[18]

I think the field will eventually learn this, but slowly, because the people buying healthcare AI are rarely the people whose workflows it changes.

Discussion

What incentive misalignments have you observed in healthcare AI deployments? I’m particularly interested in cases where explicit incentive redesign, not just better training or change management, moved the needle on adoption.

Next issue: We go global. Low- and middle-income countries may have structural advantages for healthcare AI adoption that high-income health systems lack.

References:

1. Tierney AA, Gayre G, Hoberman B, et al. Ambient Artificial Intelligence Scribes: Learnings after 1 Year and over 2.5 Million Uses. NEJM Catalyst. 2025. doi:10.1056/CAT.25.0040
2. Patel K, et al. Use of ambient AI scribing: Impact on physician administrative burden and patient care. JCO Oncology Practice. 2024;20(10_suppl):418. doi:10.1200/OP.2024.20.10_suppl.418
3. Dai T, Kvedar JC, Polsky D. Policy brief: ambient AI scribes and the coding arms race. npj Digital Medicine. 2025;8:780. doi:10.1038/s41746-025-02272-z
4. FDA. Artificial Intelligence and Machine Learning (AI/ML)-Enabled Medical Devices. Updated 2025.
5. Dogra S, Silva E III, Rajpurkar P. Reimbursement in the age of generalist radiology artificial intelligence. npj Digital Medicine. 2024. PMC11612271.
6. Bipartisan Policy Center. Paying for AI in U.S. Health Care. 2025.
7. Felisberto M, et al. Override rate of DDI alerts in CDS systems: meta-analysis. Health Informatics Journal. 2024;30(2). doi:10.1177/14604582241263242
8. Agniel D, Kohane IS, Weber GM. Biases in electronic health record data due to processes within the healthcare system: retrospective observational study. BMJ. 2018;361:k1479. doi:10.1136/bmj.k1479
9. Gianfrancesco MA, Tamang S, Yazdany J, Schmajuk G. Potential Biases in Machine Learning Algorithms Using Electronic Health Record Data. JAMA Intern Med. 2018;178(11):1544-1547. doi:10.1001/jamainternmed.2018.3763
10. Arrow K. Uncertainty and the Welfare Economics of Medical Care. Am Econ Rev. 1963;53(5):941-973.
11. Chandra A, Skinner J. Technology Growth and Expenditure Growth in Health Care. J Econ Lit. 2012;50(3):645-680.
12. Tai-Seale M, Olson CW, Li J, et al. Electronic Health Record Logs Indicate That Physicians Split Time Evenly Between Seeing Patients And Desktop Medicine. Health Aff. 2017;36(4):655-662.
13. Maliha G, Gerke S, Cohen IG, Parikh RB. Artificial Intelligence and Liability in Medicine: Balancing Safety and Innovation. Milbank Q. 2021;99(3):629-647.
14. Estate of Gene B. Lokken v. UnitedHealth Group, class action complaint (filed Nov 2023). Case allowed to proceed, Feb 2025. Reported by STAT News, CBS News, Healthcare Finance News. UnitedHealth disputes characterization.
15. Adams R, Henry KE, Sridharan A, et al. Prospective, multi-site study of patient outcomes after implementation of the TREWS machine learning-based early warning system for sepsis. Nat Med. 2022;28:1455-1460.
16. Henry KE, Kornfield R, Sridharan A, et al. Human-machine teaming is key to AI adoption: clinicians’ experiences with a deployed machine learning system. npj Digital Medicine. 2022;5:97.
17. Rajkomar A, Dean J, Kohane I. Machine Learning in Medicine. N Engl J Med. 2019;380(14):1347-1358.
18. Dranove D, Garthwaite C. Artificial Intelligence, the Evolution of the Health Care Value Chain, and the Future of the Physician. In: Agrawal A, Gans J, Goldfarb A, Tucker C, eds. The Economics of Artificial Intelligence: Health Care Challenges. University of Chicago Press; 2024:9-45.

Off-Label: Health economics for the digital age · DHEPLab Newsletter
